I look at Blade Runner as the last analog science-fiction movie made, because we didn’t have all the advantages that people have now. And I’m glad we didn’t, because there’s nothing artificial about it. There’s no computer-generated images in the film.

— David L. Snyder, Art Director

Any movie that gets a “Five-Disc Ultimate Collectors Edition” deserves serious attention, even in the midst of a busy semester, and there are few films more integral to the genre of science fiction or the craft of visual effects than Blade Runner. (Ordinarily I’d follow the stylistic rules about which I browbeat my Intro to Film students and follow this title with the year of release, 1982. But one of the many confounding and wonderful things about Blade Runner is the way in which it resists confinement to any one historical moment. By this I refer not only to its carefully designed and brilliantly realized vision of Los Angeles in 2019 [now a mere 11 years away!] but the many-versioned indeterminacy of its status as an industrial artifact, one that has been revamped, recut, and released many times throughout the two and a half decades of its cultural existence. Blade Runner in its revisions has almost dissolved the boundaries separating preproduction, production, and postproduction — the three stages of the traditional cinematic lifecycle — to become that rarest of filmic objects, the always-being-made. The only thing, in fact, that keeps Blade Runner from sliding into the same sad abyss as the first Star Wars [an object so scribbled-over with tweaks and touch-ups that it has almost unraveled the alchemy by which it initially transmuted an archive of tin-plated pop-culture precursors into a golden original] is the auteur-god at the center of its cosmology of texts: unlike George Lucas, Ridley Scott seems willing to use words like “final” and “definitive” — charged terms in their implicit contract to stop futzing around with a collectively cherished memory.)

I grabbed the DVDs from Swarthmore’s library last week to prep a guest lecture for a seminar a friend of mine is teaching in the English Department, and in the course of plowing through the three-and-a-half-hour production documentary “Dangerous Days” came across the quote from David L. Snyder that opens this post. What a remarkable statement — all the more amazing for how quickly and easily it goes by. If there is a conceptual digestive system for ideas as they circulate through time and our ideological networks, surely this is evidence of a successfully broken-down and assimilated “truth,” one which we’ve masticated and incorporated into our perception of film without ever realizing what an odd mouthful it makes. There’s nothing artificial about it, says David Snyder. Is he referring to the live-action performances of Harrison Ford, Rutger Hauer, and Sean Young? The “retrofitted” backlot of LA 2019, packed with costumed extras and drenched in practical environmental effects from smoke machines and water sprinklers? The cars futurized according to the extrapolative artwork of Syd Mead?

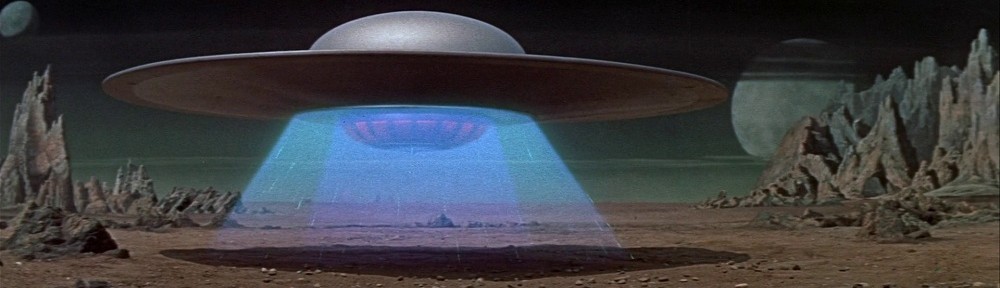

No: Snyder is talking about visual effects — the virtuoso work of a small army headed by Douglas Trumbull and Richard Yuricich — a suite of shots peppered throughout the film that map the hellish, vertiginous altitudes above the drippy neon streets of Lawrence G. Paull’s production design. Snyder refers, in other words, to shots produced exclusively through falsification: miniature vehicles, kitbashed cityscapes, and painted mattes, each piece captured in multiple “passes” and composited into frames that present themselves to the eye as unified gestalts but are in fact flattened collages, mosaics of elements captured in radically different scales, spaces, and times but made to coexist through the layerings of the optical printer: an elaborate decoupage deceptively passing itself off as immediate, indexical reality.

I get what Snyder is saying. There is something natural and real about the visual effects in Blade Runner; watching them, you feel the weight and substance of the models and lighting rigs, can almost smell the smoky haze being pumped around the light sources to create those gorgeous haloes, a signature of Trumbull’s FX work matched only by his extravagant ballet of ice-cream-cone UFOs amid boiling cloudscapes and miniature mountains in Close Encounters of the Third Kind. But what no one points out is that all of these visual effects — predigital visual effects — were once considered artificial. We used to think of them as tricks, hoodwinks, illusions. Only now that the digital revolution has come and gone, turning everything into weightless, effortless CG, do we retroactively assign the fakery of the past a glorious authenticity.

Or so the story goes. As I suggest above, and have argued elsewhere, the difference between “artificial” and “actual” in filmmaking is as much a matter of ideology as industrial method; perceptions of the medium are slippery and always open to contestation. Special and visual effects have always functioned as a kind of reality pump, investing the “nonspecial” scenes and sequences around them with an air of indexical reliability which is, itself, perhaps the most profound “effect.” With vanishingly few exceptions, actors speak lines written for them; stories are stitched into seamless continuity from fragments of film shot out of order; and, inescapably, a camera is there to record what’s happening, yet never reveals its own existence. Cinema is, prior to everything else, an artifact, and special effects function discursively to misdirect our attention onto more obvious classes of manipulation.

Now the computer has arrived as the new trick in town, enabling us to rebrand everything that came before as “real.” It’s an understandable turn of mind, but one that scholars and critics ought to navigate carefully. (Case in point: Snyder speaks as though computers didn’t exist at the time of Blade Runner. Yet it is only through the airtight registration made possible by motion-control cinematography, dependent on microprocessors for precision and memory storage for repeatability, that the film’s beautiful miniatures blend so smoothly with their surroundings.) It is possible, and worthwhile, to immerse ourselves in the virtual facade of ideology’s trompe-l’oeil — a higher order of special effect — while occasionally stepping back to acknowledge the brush strokes, the slightly imperfect matte lines that seam the composited elements of our thought.

So we’re getting to that proposal soon, then?

😉

If I may go off on a slightly different tangent of your post:

I think your point about films being revamped, re-cut, re-released, is lately applying to everything — or at least anything the studios think they can make an extra buck on. With these continuous DVD releases of “Extreme Editions” and such, now often done without any of the filmmakers involved, I’m seeing a real problem with the archiving of the “original” cuts, because — what exactly does that mean these days? I myself would classify the “theatrical cut” as always the baseline to operate from in terms of analyzing a release; however, I’d say over 50%, perhaps more, of popular films these days out of Hollywood are now altered in some fashion, sometimes minutely, sometimes significantly, by the time they reach DVD or cable — if not in their immediate release, surely within a few months or a year or two. Actually, it’s common now that digital “touch-ups” are done to releases that “didn’t have enough time” to finish everything in “post” just before their theatrical debut. It’s yet another one of these contemporary problems in the industry that really frustrates me in a critical way.

I used to think I’d always want what was called the “director’s cut” — but these days I’m not so sure. It’s called “The Donner Cut” yet we find out Michael Thau actually did most of the grunt work on it. Is Scott’s uncut “Kingdom of Heaven” significantly better than the underwhelming theatrical cut I saw, with tepid lead performances and Edward Norton under a mask? I don’t know, but I wasn’t interested enough in the theatrical cut to give a hoot. I still haven’t seen the “Spider-Man 2.5” DVD and I don’t think I’m going to — the original film was pretty perfectly done, as far as I was concerned. Internet fanboys, DVD reviewers, and even academics sometimes love to “rail” against the studios, but really, these studios wouldn’t have been in business for as long as they have without being right *more* than some of the time. Sometimes they’re *very* right about a “theatrical cut” — unfortunately, now that home video revenues proved to be a huge boost to their fortunes, they’ve raided their own libraries and continue to flood the market with unneeded “extended cuts” or “restored cuts” of movies that for the most part were “OK” just the way they were — how many DVD/video versions of “Evil Dead” have there been — 35?? But this isn’t just genre products — it happens with everything, from “Wedding Crashers” to Orson Welles (who I’m all for getting what was “intended” out — but how would we ever truly know? From some notes he left? Even if they were extensive? Maybe he would have changed his mind, too, who knows?).

And yes, perhaps “Blade Runner” is the king, or to put it another way, one of the top offenders, in this new “game” — although it hasn’t been released as many times as “Star Wars”, yet (it’s about tied with the number of “Godfather” DVDs out there, though). Neither Ridley Scott nor Fox (a studio lately notorious for mucking with its own genre projects) could settle for years (years!) on a “definitive cut” for this movie. I’m very interested in watching in-depth documentaries like the one you mention on the “Blade Runner” set — I’m just sick of having to sift through three-to-five versions of a movie before I can feel like I’ve seen the cut that “they” want me to see. I don’t know quite what to make of Blade Runner (I’m an oddball, I’ve never been a huge fan, though I can recognize its achievements in visual design), but all this “re-issue, re-package, re-package, buy both and feel deceived” culture (to quote a somewhat famous singer) has, for me, almost killed the enjoyment of buying films for home viewing anymore. Even the “Superman” DVD cuts confuse me these days — they’re really not very much different!

Theoretically, yes, the studios tend to often “own” the final product, and are usually within their rights to do whatever they wish with it following its release, but now filmmakers *themselves* seem to be dilly-dallying more and more frequently — has technology, yes, digital technology, brainwashed these “auteurs” into thinking that they can and should continually muck with their own pieces of art, and that we’ll keep buying them over and over and over again? Yes, even some directors I love — Alex Proyas and Guillermo del Toro — are now guilty of this. Mr. Proyas: “Dark City” was perfectly brilliant in the cut which was released in 1998 and on DVD soon after, and its use of visual effects holds up surprisingly well, I’d argue — you didn’t need to do anything at all with it (except perhaps remove that spoiling opening narration).

More and more, I am appreciating filmmakers who seem to have the integrity *not* to go back and re-visit their own movies — even when they want to. Unfortunately, most of them don’t live in Hollywood. I’m looking at you, Michael Winterbottom, Patrice Leconte, Steven Soderbergh (who’s never “re-cut” “Sex, Lies and Videotape” once!), Phillip Noyce, Spike Lee (say what you will about his controversial career, but he’s never re-cut his movies), Beat Takeshi, Ang Lee (no matter how much “Hulk” forced Universal execs into a stunned silence at an early screening, Ang didn’t re-cut it — and wasn’t forced to — and still hasn’t re-visited it, and probably never will!). Bob, did Bergman ever re-cut any of his films? Did Godard (perhaps someone can enlighten me whether he’d believe in this sort of thing)?

“Always being made” indeed.

Now, as for special effects…

Great thoughts on BR and the “artificial,” Bob. I always point out to students the fallacy of the “natural” when assessing any cultural artifact (though I still grant that Bazin had a point about what’s captured and not captured by the camera). Every sight and sound in every media text out there has been removed from “nature” multiple times.

That said, I think Snyder’s comment is fascinating because of the line it draws between what have really long been pretty permeable production modes (“computer-generated” vs. not). This distinction matters more to practitioners and critics than to studios and viewers, and has long roots in the cultural positioning of every form of art. It enables sloppy reasoning (“I hate CGI!” kinds of blanket rants), but it also enables compelling arguments (e.g., Dogme 95 films).

To be quite specific to the Hollywood visual-effects realm, the invocation of the moving line between “nature” and “artifice” tries to align particular people and projects with traditions of craft and/or art, vs. commerce, with “CGI” really standing in for the more specific issue of “bad CGI.” I personally can’t stand “bad CGI,” and I think it’s made too many recent action and/or genre films near unwatchable (I’m looking at you, Spider-Man 3). However, I’m all for “good CGI” at any level (subtle to, say, Speed Racer). When particular people go after “CGI,” they’re really lumping the good in with the bad, and (as you point out) conveniently forgetting how much the entire endeavor of filmmaking is dependent on technology.

Michael: thanks for these thoughts on the always-being-made. I feel your pain! What’s interesting to me is how this “version crisis” reflects a basic tension between our belief (our need to believe) in a singular, authored artwork and the ruthless reproductive drive of movie commerce, always seeking to take familiar pleasures, cherished texts, and differentiate them just enough to mint a new product. Surely this is a function of the rental and collectible market — from VHS to laserdisc, DVD, now Blu-ray and downloads — which shifts the emphasis from the shared theatrical experience to the privately-owned box on a shelf. Yet that moment of first encounter remains, for most cinephiles, the “definitive” experience against which all updates and revisions seem like soulless dilutions. Confronted with the version, we glimpse the skeleton beneath the skin of industrialized art. The question is, will each new generation produce its own impressions of originality as they encounter texts for the first time? Or is the whole idea of the singular original dissolving into an endless series of products? (Didn’t Walter Benjamin write an essay about this?)

Derek: thanks for your comments. I too use Bazin as a corrective to the idea that “everything is artificial” — a position so sweeping that it might as well be “everything is real.” In this regard, I really like Phil Rosen’s Change Mummified, the last chapter of which deftly deconstructs claims about the digital’s supposed break with indexicality.

I’d also agree that the distinction (or perception of it) between CG and pre- or non-CG has fueled many a rant, alongside more nuanced responses. (Do you know the late David Foster Wallace’s essay on Terminator 2 and “FX porn”?) Here I think Shilo McClean’s Digital Storytelling is useful; though the author engages in the same convenient forgetting as most scholars of digital effects (she writes as though visual effects largely didn’t exist before the computer), her main argument is valid, mapping FX not in terms of an artificial/natural divide but through how successfully they integrate with and support traditional narrative forms — “good” storytelling.

Thanks again, Bob, for an insightful post, and Mike and David’s comments are great back-up.

I’ll put in a good word for Ridley Scott – his director’s cuts of Alien and Blade Runner (the one that removed the voice-over, the trees and stuck in a unicorn) were shorter than the originals. Amen to that. I remember one review of Costner’s The Postman (apologies to anyone who’d succeeded in forgetting it) that said “I suggest, when the inevitable director’s cut comes around, losing half an hour from the beginning and an hour from the end.” How much time do these people think we have for their “vision”?!

I’m glad you guys pointed to Bazin. I’ve been reading Bazin all day, with the vague sense that I wanted to make sure my students didn’t dismiss him as a straw man who was wrong about his ontological claims for cinema. It’s not easy when he proffers that teleological argument about cinema’s vocation for realism and its unique ability to appeal to a human desire for resemblance. Where I suspect he retains relevance is in the minds of people like Snyder who evoke artifice as meaning something that never had a profilmic existence. There’s definitely a discourse around CGI that says it doesn’t have the same sense of “presence” as animatronics, even though those puppets may have less articulation than a performance-captured CG figure. I tend to agree, but I’m not sure on what grounds I’ve based that agreement. A good example is the difference between Alien and Alien 3. The former is so much more frightening than the latter, but is it because the cable-operated or man-in-a-monster-suit creatures are inherently more vivid than the sleek CG beasts of Fincher’s movie, or is it just that the films as a whole are differently mounted? I wonder if cognitive film theory has anything to offer on this, and if our extratextual knowledge of the film’s making has any input to that sense of presence (i.e. we know that the puppet is profilmically present, even if we don’t perceive the CGI version as conversely absent).

I don’t know about the USA, but in the UK the fad is for “ruder, uncut” versions of gross-out comedies on DVD – the “unseen edition” or whatever. I have no doubt that they just hold back some T&A from the theatrical release to give them something to shout about on the video circuit. The Region 1 release of The Big Sleep, to take this back a few years, has footage from the first release of the film, which had a lot more plot exposition and was missing some of the saucy dialogue from the later, more famous release. Warners were worried that their heavily invested new starlet’s career was off to a bad start and wanted to give her more big scenes that would capitalise on the offscreen love affair between the two headliners. I’m sure there are countless proofs that revised re-releases are nothing new.

To follow up on my points, and Dan’s, here is an interesting excerpt of a new interview with actor Daniel Craig regarding the new James Bond film, which specifically touches upon his feelings for the theatrically released cut of “Blade Runner”:

IGN: The second question is, “What liberties have you tried or wanted to take with the character, but were told to shy away from because it didn’t fit with the character at all or at this point in time?”

Daniel Craig: “I wasn’t allowed. [laughter] … I haven’t been denied anything really … you know, I think one of the things about making a film is … this is kind of a long roundabout way of answering this question but what makes movies sort of immediate is the restrictions you put upon them. This is an example which is probably a bad example but I love the original cut of Blade Runner with the voice-over. I mean I still love it. I still think it’s great because that was the movie they had to put out at that time because those were the restrictions that were put upon it. And so it has sort of something about it — you know, despite the fact there’s ten others now that are kind of an hour longer than all the others.

That’s kind of what we have when we’re making Bond movies. We are under the tightest of schedules so you’re on the hoof, you’re inventing things as you go. You go, “Oh, what about this?” I mean we can’t change a lot because once things are set and once the ball’s rolling on a movie like this there’s no stopping it so we can’t change action sequences all the time. We do but I mean they’re kind of it’s because when things — it’s not because we suddenly have inspiration, it’s because physically we can’t do something or we have to change an idea. You have to keep thinking and trying to bring these things and say, “Oh, I’ll try this out.” But it’s the restrictions that make it interesting.

It’s a really convoluted way of answering the question. I’m just pushing it back but that for me is sort of the process of filmmaking. It’s about what you can’t do more than what you [can], as opposed to the freedom that you’re given. Often the more freedom you’re given the more kind of meandering a movie becomes and maybe less interesting.”

http://movies.ign.com/articles/911/911930p3.html

I don’t care what anyone says — I liked the happy ending.

I’m not up on the latest *conneries* from our French friends, but here’s a bit of Baudelaire that is *a propos* on more than one level:

“Do we display all the rags, the rouge, the pulleys, the chains, the alterations, the scribbled-over proof sheets, in short all the horrors that make up the sanctuary of art?”

–From _Flowers of Evil_, “Three Drafts of a Preface”

(That quote is even better in context. I urge you all to read the whole essay.)

I looked up Scott on IMDB after seeing an ad for _Body of Lies_ today. Dude’s got SEVEN more movies in various stages of production.

Hi Bob. Just found this article a few days ago. I’m astonished and frankly delighted at the vast amount of analysis and thought you rendered into this endeavor. I just had one comment to clarify my quotation “There’s (should have said ‘There are’) no computer generated images in the film.”

FOLLOWED BY YOUR OBSERVATION:

(Case in point: Snyder speaks as though computers didn’t exist at the time of Blade Runner. Yet it is only through the airtight registration made possible by motion-control cinematography, dependent on microprocessors for precision and memory storage for repeatability, that the film’s beautiful miniatures blend so smoothly with their surroundings.)

To clarify, there were no images, i.e. matte paintings, miniatures & settings, that were computer generated. You are correct, in fact, that images shot in Mo-Con allowed the marriage of, in some instances, up to 12 elements. This would not have been possible in the absence of these components. In closing, let’s just say that Ridley’s astonishing moving pictures were ‘computer assisted images’. This would have never been possible without Doug Trumbull’s EEG wizard team.

I commend you for writing a most intellectual analysis of the art of the film. dls